The AI Strategy Gap Nobody's Talking About

Why the smartest CTOs we know are stuck, and what the others are doing differently.

We run biweekly roundtables with heads of engineering and product at B2B SaaS companies. Different industries, different stages, different tech stacks. Over the last year, a recurring pattern has emerged.

It goes something like this:

"We know we need to do something with AI. Pressure from leadership is real. Competitors are marketing AI features. Some of our engineers are already using tools on their own. But we don't have a plan. It's unstructured and unfocused. And honestly, we're afraid of picking the wrong thing."

If that sounds familiar, you're in very good company. And you're not behind. You're exactly where most CTOs at your stage are. The difference between the ones who break through and the ones who stay stuck isn't talent, budget, or technical skill. It's a gap that almost nobody is talking about.

Most of the CTOs we work with are technically capable. They've been reading about LLMs, experimenting with tools, thinking about where AI could help. Some have engineers who've built impressive prototypes. The capability isn't the problem.

The problem is that none of it has been translated into something leadership can fund, the team can execute, and the board can evaluate. There's a gap between "I have ideas about AI" and "here's a plan that connects AI to business outcomes, accounts for real constraints, and gives us something to measure in 30 days."

That gap is where credibility lives or dies.

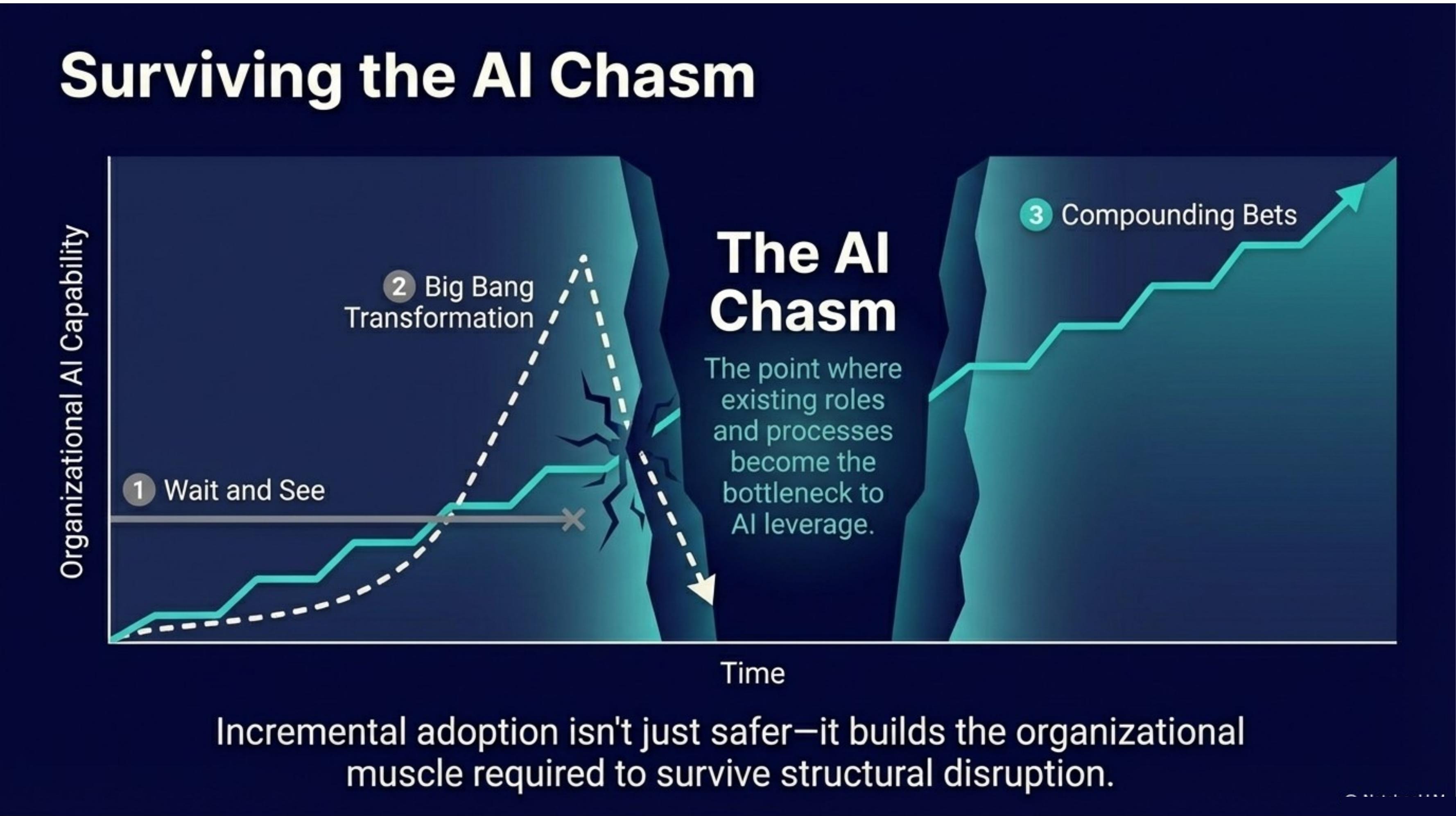

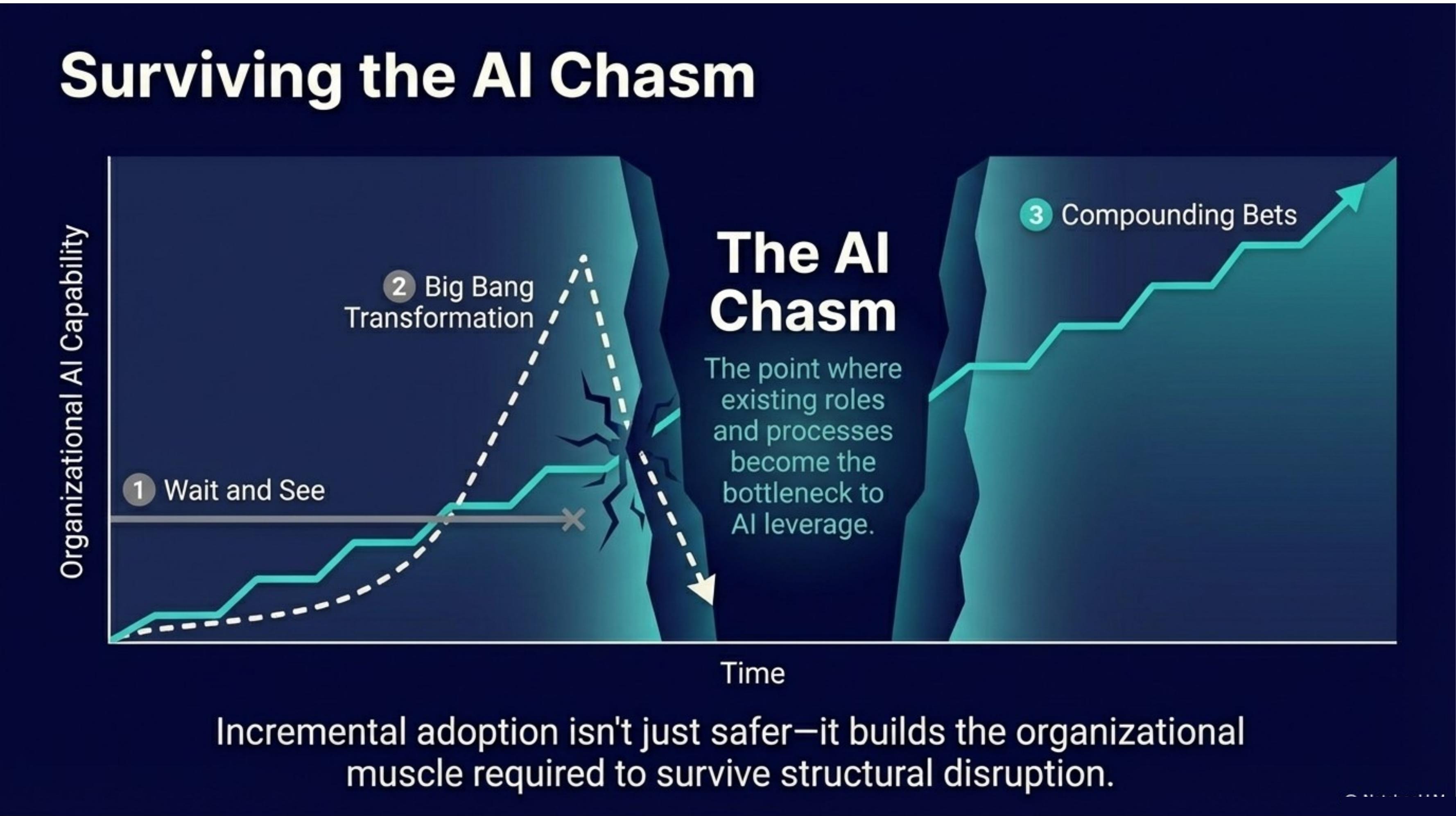

We've watched CTOs lose months in this gap. Not because they're doing nothing. Because they're doing everything. Reading, experimenting, evaluating tools, attending webinars, running proofs of concept that never leave the lab. Plus their pre-AI jobs. Lots of motion. No momentum. Meanwhile, the CEO is telling the board that AI is coming, the VP of Sales is forwarding competitive intel, and the engineering lead is pushing back because the team is already at capacity.

When the pressure builds, most leaders reach for one of six familiar moves. Each one is reasonable. Each one has worked in other contexts. In the current AI environment, each one tends to make things worse.

None of these moves are stupid. They're just insufficient. The gap they all share is the same one: they skip the alignment step.

The CTOs who are breaking through share a few patterns that are worth naming.

Here's an uncomfortable observation: the companies pulling ahead on AI aren't the ones with the best engineers or the biggest budgets. They're the ones where leadership got the alignment and focus right first. Where the CTO built a plan the CEO could champion, the VP of Engineering or Product could execute, and the team could believe in.

The technology is the easy part. The hard part is coordinating humans under pressure. Building consensus without waiting for certainty. Making a defensible bet when the ground is shifting under you. Translating technical possibility into business language that leadership can act on.

"AI isn't hard. Coordinating humans under pressure is hard."

That's a leadership challenge, not a technology challenge. And it's solvable.

A focused engagement that produces a defensible plan, aligned leadership, and a repeatable structure. In weeks, not quarters.

Request a Fit Call